Over the last 7 months, my flatmate has been running a sandwich shop called Sando Club (arguably the best in Bangalore). While I have no skin in the game, I am a persistent cheerleader, and I make sure that I get to sit in on some of the team’s marketing-related brainstorms that end up happening in our shared house’s living room. In these discussions, I am not without an agenda, though.

Of course, there is the utter joy of witnessing and contributing to a close friend’s emerging business. But beyond that, my goal in most of these discussions is to push the use of AI in setting up a new consumer enterprise from the ground up. In pursuit of this goal, I sometimes also whip up simple prototypes.

I don’t get to work with image and video AI at work, so this is also a good way for me to get hands-on with these capabilities. My friends really do treat the store as a baby, and in most cases, what I think of as good enough is laughable to them. This also gives me a pulse check on how high-taste brand marketers might respond to completely AI-generated assets right now.

The prototypes I put together over these months include a 77-page AI-written book, custom wrapping paper, cakes, postcards, and posters. Some turned out well. Others revealed how wide the gap between AI mockups and physical execution really is.

These experiences helped me realise that AI makes creative ideation nearly free, but craft and implementation remain hard.

This also has implications for the future of brand marketing: brands may reallocate labour towards bringing things into the real world, and expand their digital interactions with users to be mass-customised, hyper-personalised, and all-encompassing.

What follows is a rundown of how each prototype went and what it might mean for brand marketing.

Extending The World in Print

Most brands build a world that lives on their social media. So does Sando Club. Some of the first things I worked on were objects that extended this world into fiction.

I used Claude Code to write a book that only exists in the Sando Club universe, and brought Boss Man, a character from their Instagram, onto wrapping paper.

The AI-written book was the most comprehensive of all efforts. This project was also exciting since, after working on the e-books at Blume, this was my second time editing a book into creation.

We had a list of custom titles and covers, and the original idea was to print the covers and put them around regular books. In retrospect, that might have been easier, but I recommended we use AI to generate the actual content for these fictional books and get them printed.

Of the seven or so titles my flatmate shared with me, I finished only one copy. That, too, currently rests in a drawer in my room and not at the store. But regardless, there is something to be said about the process of getting it done.

As soon as we got started, I realised that the team had selected titles based on how funny they were, not on whether a full-length book could be written around them. This meant I had to reverse-engineer the process of coming up with a book and its title. I had to go back from the title to the best category of book to write around it.

I was determined to make sure that the book was something that one could at least skim through once and have a meaningful experience.

The title I chose to work on from amongst the seven he sent was “Calories Can’t Hurt You. But People Can.” I decided that this could be a good, almost ethnographic, social commentary book. If you are interested in checking out the full book, you can view it here.

I used Claude Code to do the entire thing from idea to finished print-ready version of a 77-page book.

I started by providing Claude Code with a high-level Book Design Document (book_config.md). The process then broke down into four tasks:

1. Research – For this, the book design document included instructions and samples, and the task was to both find sources (research_links.md) and extract relevant quotations from them (/research_snippets).

2. Book Structuring – This was a collaborative effort. We iterated on outline_clusters.md until the chapter structure felt right.

3. Generation – Claude Code did much of this independently once the research and structure were complete, producing /chapter-text, which was later converted into /chapters to use the LaTeX template I provided.

4. Editing – This was the most human-in-the-loop step, though still minimal. I double-checked and manually edited the headings, subheadings, and highlighted quotes. I also used an evaluation_rubric.md for Claude to run a sub-agent review pipeline. Post this, everything compiled into full-book-latex.tex for printing.
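For the curious, the last step of that list (everything compiling into full-book-latex.tex) can be sketched roughly as below. This is a minimal illustration and not the script Claude Code actually produced: it assumes the /chapters folder holds one .tex file per chapter, named so that alphabetical order is reading order, and the preamble is a hypothetical stand-in for the LaTeX template I provided.

```python
from pathlib import Path

# Hypothetical preamble and ending; the real template is not shown in this post.
PREAMBLE = "\\documentclass{book}\n\\begin{document}\n"
POSTAMBLE = "\\end{document}\n"


def assemble_book(chapters_dir: Path, output_file: Path) -> list[str]:
    """Write a master LaTeX file that pulls in every chapter in name order."""
    # Assumption: files are named so that sorting gives reading order,
    # e.g. 01-intro.tex, 02-something.tex
    chapter_files = sorted(chapters_dir.glob("*.tex"))
    parts = [PREAMBLE]
    for chapter in chapter_files:
        # \input accepts a path that includes the .tex extension
        parts.append(f"\\input{{{chapter.as_posix()}}}\n")
    parts.append(POSTAMBLE)
    output_file.write_text("".join(parts), encoding="utf-8")
    # Return the chapter names so the caller can eyeball the order
    return [chapter.name for chapter in chapter_files]
```

Calling `assemble_book(Path("chapters"), Path("full-book-latex.tex"))` would write the master file and return the chapter file names in the order they were included.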

Once I locked in the book category, I modularised the text into categories and types I could imagine being repeated across chapters. The Book Design Document included descriptions and samples of these elements. I still had to edit, though, to avoid repetition and over-reliance on the Book Design Document.

Even after all the work I had done in preempting the need for content sources and research to avoid slop, it was incredibly challenging to come up with something coherent beyond 77 pages. This is also a very liberal page count since the book version printed includes a “Practice Manual” section, some empty pages for a “Journal”, and so on.

The real learning here was how challenging it is to print books in the real world. Stripe Publishing makes more sense to me now. Book printing really is a craft and a dying one.

77 pages are not sufficient for a mass-market paperback. I did not spend enough time considering the right paper quality, and since it was a single copy, the spine came out a little damaged and wobbly. I imagine book printing operations are not set up for small MOQs.

The wrapping paper was interesting because, though I wanted it to be in brand colors and fonts, the text itself did not exist.

I was amazed at how simple it was to take text samples from other content on their Instagram and plug them into ChatGPT with custom instructions to generate something that came pretty close to matching their design language.

The colors did change slightly with every generation. To get around this, I tried using Canva AI to paint in the brand colors, but it didn’t work well. I also tried plugging in the RGB code for the brand colors, but it’s hard to say whether the model followed it. In the end, I went with what felt good enough to my untrained, non-brand-expert eye.
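In hindsight, one way to take the guesswork out of the color question would have been to measure the drift instead of eyeballing it. The sketch below is something I did not actually run at the time: the hex codes are hypothetical placeholders rather than Sando Club's real brand colors, and plain Euclidean distance in RGB is only a crude proxy for how different two colors look.

```python
import math

# Hypothetical brand palette; these are NOT the real Sando Club hex codes.
BRAND_PALETTE = {
    "blue": "#1F4FD8",
    "orange": "#F28C28",
}


def hex_to_rgb(code: str) -> tuple[int, int, int]:
    """Convert a hex code such as '#FF8000' into an (R, G, B) tuple."""
    code = code.lstrip("#")
    return tuple(int(code[i:i + 2], 16) for i in (0, 2, 4))


def nearest_brand_colour(pixel: tuple[int, int, int],
                         palette: dict[str, str]) -> tuple[str, float]:
    """Return the closest palette colour name and its RGB distance."""
    distances = {
        name: math.dist(pixel, hex_to_rgb(code))
        for name, code in palette.items()
    }
    name = min(distances, key=distances.get)
    return name, distances[name]


# Example: a pixel sampled from a generated image (made-up value)
name, drift = nearest_brand_colour((250, 140, 45), BRAND_PALETTE)
print(f"closest to {name}, off by {drift:.1f} RGB units")
```

A distance of 0 means an exact match. Comparing the drift across generations, on pixels sampled from the same spot in each image, would at least show whether pasting the RGB code into the prompt made any difference.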

Here again, though, my limitation in getting things printed came through.

Once I had the text, I had to place it on Canva, sized to be a wrapping paper. Though I have done this before, I messed up the corner placement, which is critical for wrapping paper because it wraps around. I don’t know if this is conventional wisdom, but it has been relevant in my experience.

From Paper to Flour

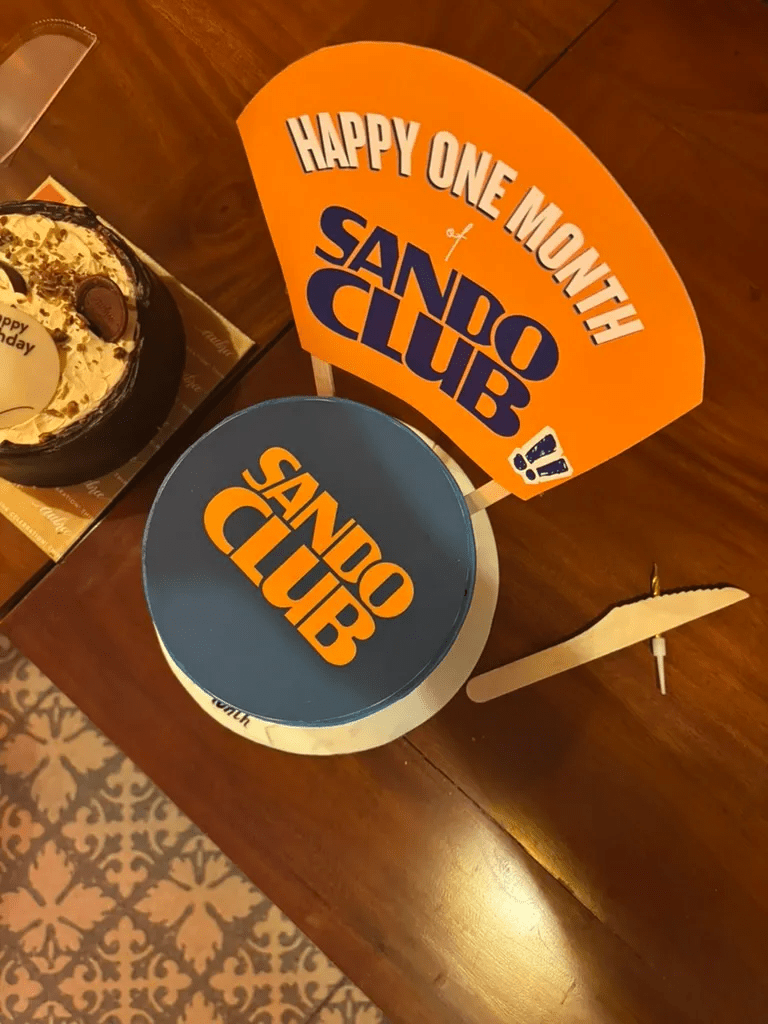

Considering Sando Club is a food brand, it made sense to try something on the food front. They do a much better job of food customisation than I ever could, and if anything, these little cake-printing rendezvous showed me why.

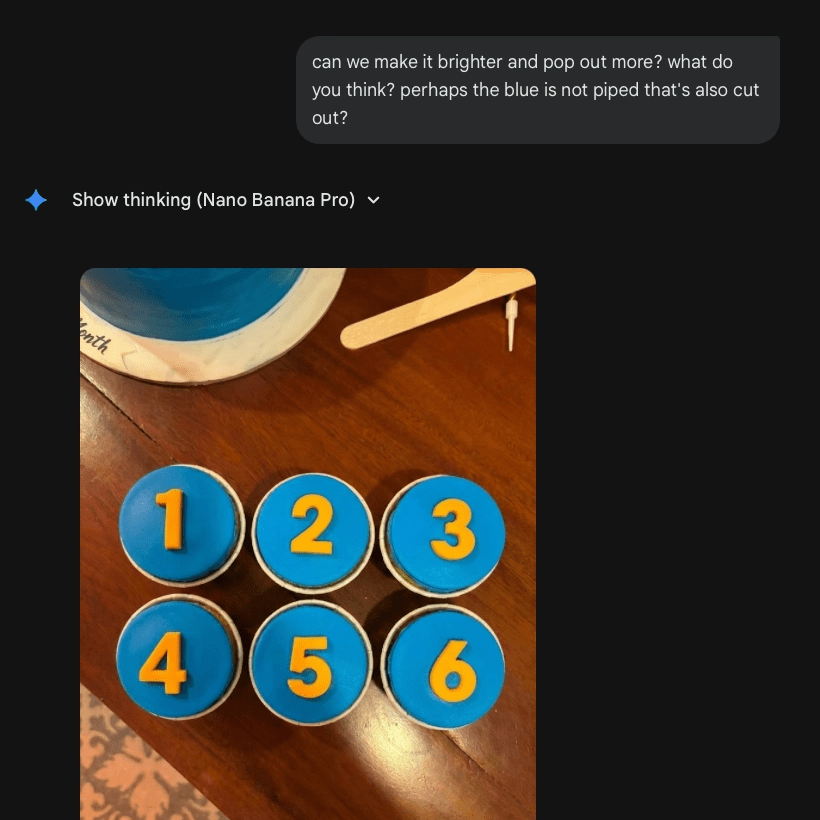

The first cake was simple. It was blue all around with their blue and orange logo printed on top. For the second, I wanted to get 6 cupcakes for an event, with the numbers 1 to 6 written on each. This was the one that proved to be a bigger challenge.

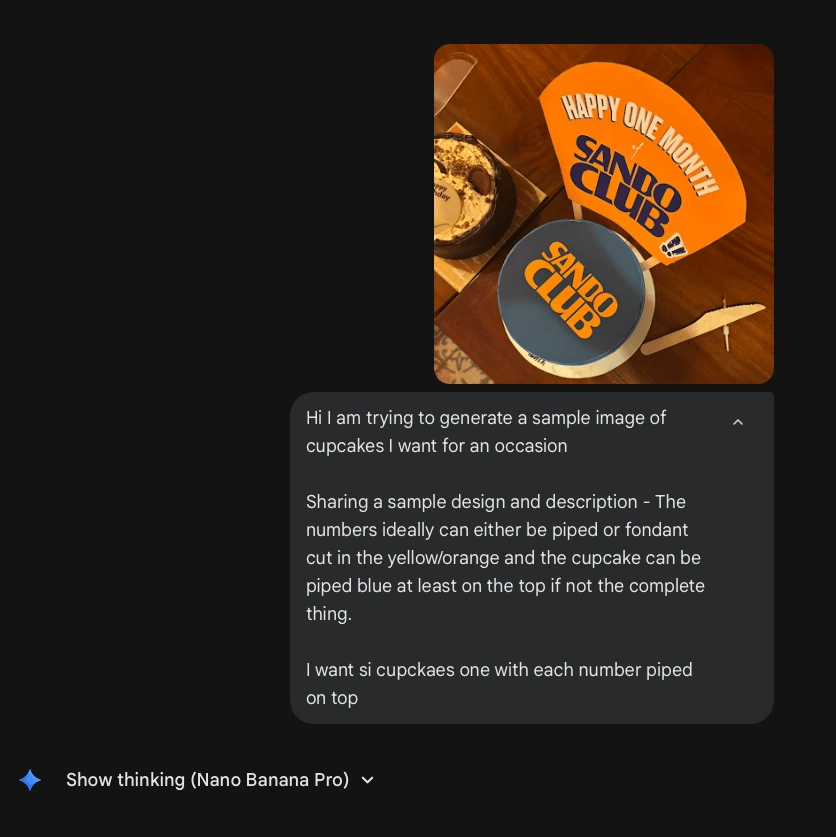

I shared a text description with the bakery over WhatsApp, and they sent back an AI-generated image as a sample. It was not good and, worse, most likely came from the free version of ChatGPT's image generation.

So I took the exact message I had sent to the bakery, along with a sample of the previous cake, and put it into Nano Banana Pro. It did a wonderful job.

All was well and merry until the cupcakes arrived, because the image-to-flour transition also showed me how little I know about cake design.

I could visualise what I had in my mind’s eye, and the image generation itself was pretty accurate, but the design I described and the sample I had in mind were both clearly too simplistic for what should be on a ‘cupcake’.

I also only generated a top-view image, and my instructions to the bakers had gaps in them.

In the end, the cupcakes were not custom-baked as much as regular cupcakes from their store, topped with the custom topping made exactly to spec from the image I shared.

Fair to say that the gap between AI-generated reference and real-world execution is real. And so is the detail required in human-to-human communication. AI makes things beginner-friendly, but it doesn’t teach you the levels of detail involved in a craft you don’t know.

Across All Visual Touchpoints

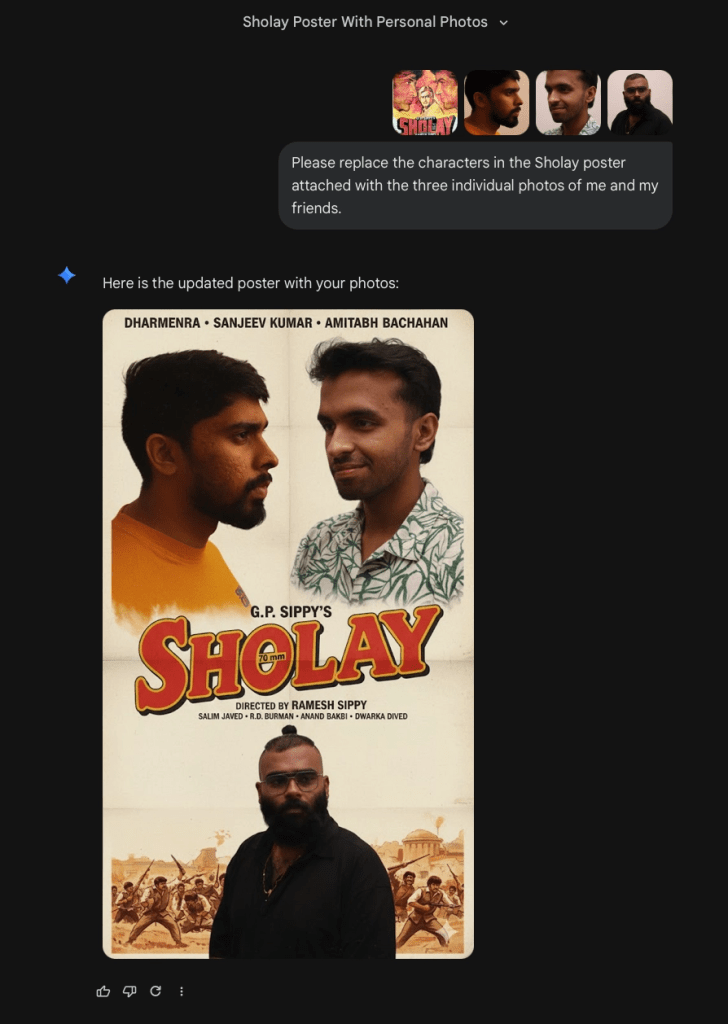

The idea here was to take existing brand assets – menu items, the founder’s face, content from their Instagram – and burst them across all visual touchpoints. Remix popular culture so proactively that all media artefacts evoke the brand.

That was the inspiration, at least. These were small illustrations of how it could happen.

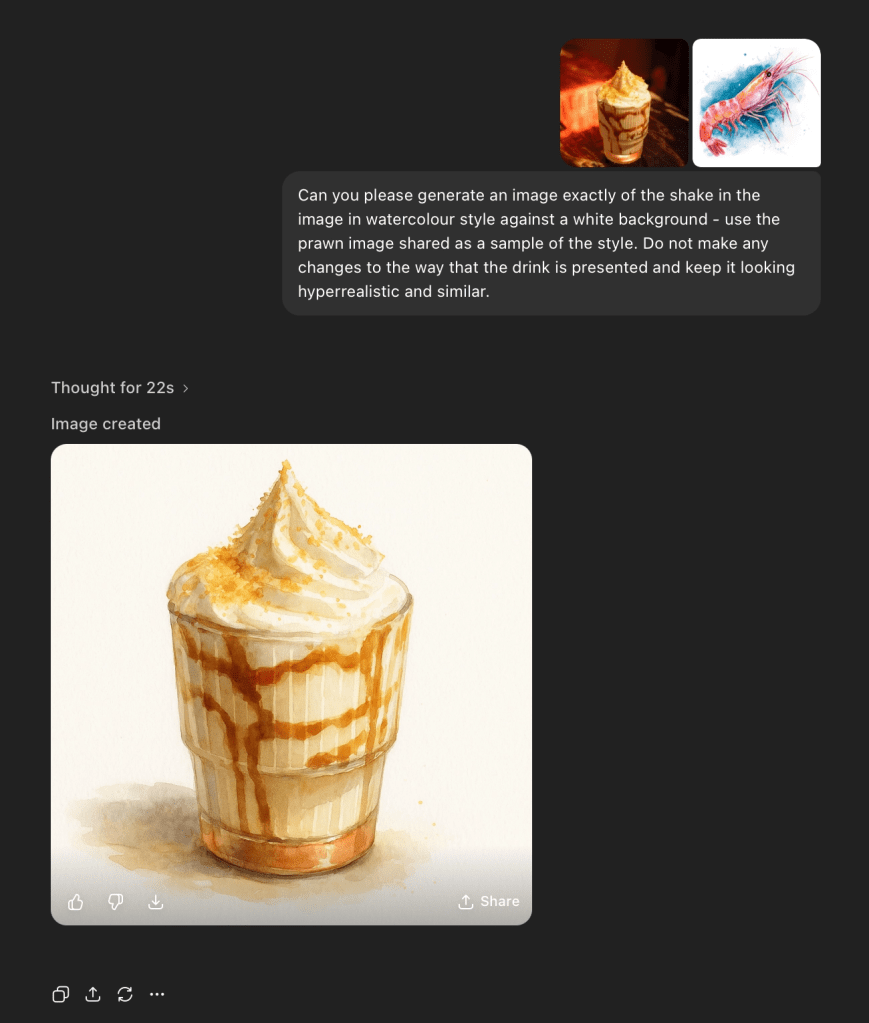

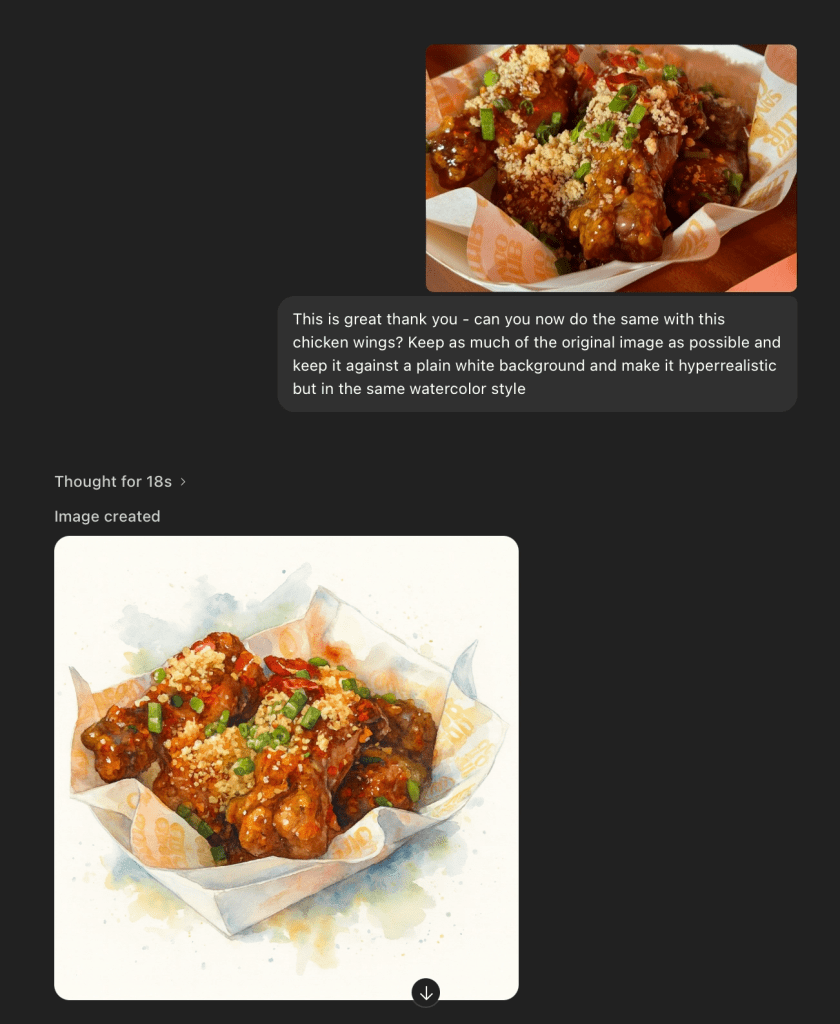

I mainly generated postcards and posters, and for both, I used Nano Banana Pro or the ChatGPT Pro version of image generation.

For the postcards, I used images from their Instagram, prompted image generation models to turn them into watercolor-on-paper illustrations, and then used Canva AI to remove backgrounds and erase unwanted elements.

I got these printed from Printo, too, and unlike the book and wrapping paper, these turned out well.

This was also because I did not attempt to change the perspective of the photographs much and went with whatever perspectives had already been captured by the brilliant photographer on the Sando Club team. [1]

On the other hand, perspective was a bigger bottleneck for the posters. They were for a collaboration announcement and the team was hoping to play with a classic movie poster for this. The task was to replace the faces of the actors in the poster with the faces of team members from the two restaurants.

This was a challenge because I had limited photos of the two teams. The first few attempts at these turned out a little too mechanical. Image models can be a bit dunce-y about the physics of the world until actively prompted, and so I did what I could to get around this by using iterative prompting.

I still believe, though, that the limitation was my ability to articulate what I am seeing in my mind’s eye in words and to provide enough samples or starting points for the model to go off of.

The team did end up just working hard on it and creating a poster which was 100% human – human imagined and human crafted. Who knows what was lost or gained in that. The poster did well though and the collaboration even better.

Some Closing Thoughts

1. Mass Customisation is finally practical.

Pine and Gilmore, authors of The Experience Economy (2011), introduce mass customisation as “efficiently serving customers uniquely, combining the coequal imperatives for both low cost and individual customization present in today’s highly turbulent, competitive environment.”

Hyperpersonalisation of experiential offerings is often limited by cost and effort, but with AI, both barriers are lower.

Companies can create greater value for each individual by focusing on the exact combination of features or benefits they desire, without incurring high marginal labour costs.

And in our world of high dopamine baselines, designing an experience for the average, not the individual, is what keeps a merely good experience from becoming one I would recommend to others.

2. Design Systems expand to Marketing.

Design systems have traditionally been about visual consistency and focused on product design. The content of marketing campaigns has been treated as the more creative, variable work that must come from whacky, vibey Marketers. But generative AI changes this.

A brand’s vibe can now be captured in samples and prompts, then infinitely recreated through these models.

The Book Design Document I used for the book, the Instagram samples I fed into ChatGPT, these are early versions of design systems that cover not just how a brand looks, but how it speaks and creates.

3. Brand metaverses will rise.

With AI, brands can expand the scope of their influence in their customers’ real and virtual lives. Hyperpersonalised and mass-customised brand artefacts make customers feel they belong to a group larger than themselves.

I can see a future where brands create custom worlds for themselves and their customers, complete with characters, stories, content, and custom interactions. Essentially metaverses.

Since working on these prototypes, I have been asking people if they would watch their favourite movies with the characters replaced by their friends and loved ones. Most say yes. I have not made one yet to see if this holds.

AI books get more mixed reviews, though. Most folks are wary of AI slop, and some raise the question of ‘AI authorship’ and thence dismiss the content entirely.

There is merit in these complaints. There has always been high and low culture, especially in literature, and AI-generated books definitely face an uphill battle to be considered of high taste.

But considering how vast popular culture has become, I am still bullish they will take off. Not because AI will write wonderful books necessarily, but because AI will make it easier to write books that every individual person would want to read.

4. The real world is harder than the virtual.

My main learning from these prototypes is that bringing things into the real world is hard. There are so many tiny things that you don’t think about as things materialise out of the virtual sphere. But this is also an opportunity.

Brands can use AI to automate some of the more intellectual creative ideation and focus their human energy on the implementation and craft of the real world.

As AI makes it easier to generate immersive virtual experiences, the divide between the virtual and the real will only widen. Brands can now determine where they want to sit on this spectrum in a more meaningful way than “the internet gets us better reach.”

[1] You must check out his extended work on Instagram at @artbysharon_.

Thank you to Jassil, Aditya and Suryansh for reading early versions of this and sharing feedback.